In the last two posts, we focused on test levels. We learned what they are and how to use them in the software development lifecycle.

In this post we will look at test types.

First, we need to answer one question:

What are test types?

A test type is a group of test activities aimed at testing specific characteristics of a software system, or a part of a system, based on specific test objectives. Such objectives may include:

- Evaluating functional quality characteristics (e.g., completeness, correctness, and appropriateness)

- Evaluating non-functional quality characteristics (e.g., reliability, performance efficiency, security, compatibility, and usability)

- Evaluating whether the structure or architecture of the component or system is correct, complete, and as specified

- Evaluating the effects of changes (e.g., confirming that defects have been fixed and looking for unintended changes in behavior resulting from software or environment changes)

Note that any test type can be performed at any test level.

Now we can say more about the test types. We will look at four of them: functional, non-functional, white-box, and change-related testing.

Let’s start with the first one, functional testing.

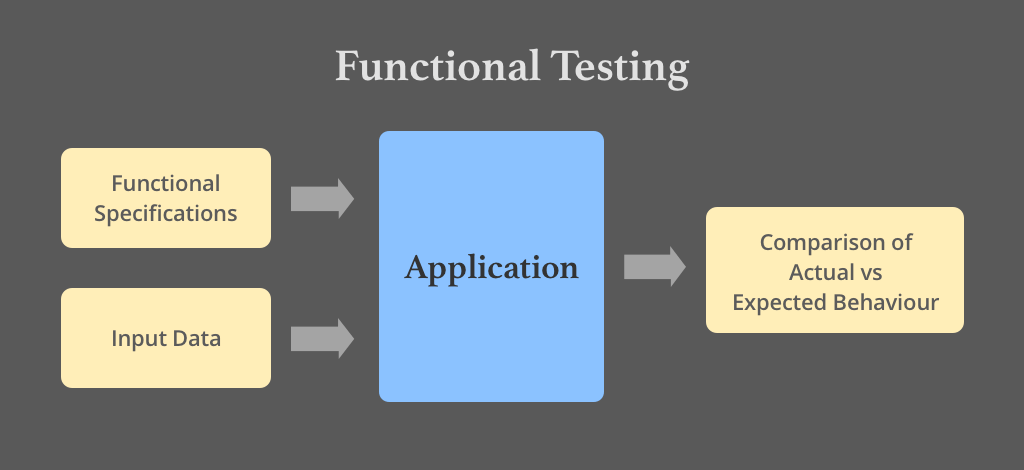

Functional testing of a system involves tests that evaluate the functions the system should perform. Functional requirements may be described in work products such as epics, user stories, use cases, business requirements specifications, or functional specifications, or they may be undocumented. Functional tests should be performed at all test levels. Black-box techniques may be used to derive test conditions and test cases for the functionality of the component or system. The thoroughness of functional testing can be measured through functional coverage, which is the extent to which some functionality has been exercised by tests, expressed as a percentage. Functional test design and execution may involve special skills or knowledge, such as knowledge of the particular business problem the software solves.

Let’s take a look at the example below.

Our tester R receives the latest version of the application for functional testing. It is a simple hotel room booking system. R starts by reading the user story to better understand the requirements the application needs to fulfil. From the user story, R learns that the user must be able to book a hotel room by, among other things, finding a room with the search function, choosing the room and the best options, and making a payment. Before designing the test cases, R needs to understand how the tourism business works in order to test the application properly from a business perspective. R then designs the test cases and executes them: he enters the test data, obtains the actual results, and compares them with the expected results.

As we can see from the example above, knowledge of the particular business problem the software solves is very important for good functional testing.
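To make R's workflow more concrete, here is a minimal sketch of black-box functional test cases for the room search. Everything in it is an assumption made for illustration: the `Room` class, the `search_rooms` function, and the sample data are hypothetical and do not come from any real booking system. The point is only that each test case pairs input data with an expected result taken from the requirements, and the actual result is compared against it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Room:
    number: int
    beds: int
    price_per_night: int
    booked: bool = False


def search_rooms(rooms, beds, max_price):
    """Hypothetical function under test: free rooms with enough beds within budget."""
    return [
        r for r in rooms
        if not r.booked and r.beds >= beds and r.price_per_night <= max_price
    ]


# Hypothetical test data.
ROOMS = [
    Room(101, beds=1, price_per_night=60),
    Room(102, beds=2, price_per_night=90),
    Room(103, beds=2, price_per_night=80, booked=True),
    Room(201, beds=3, price_per_night=150),
]

# Each test case: (input data, expected room numbers), derived from the
# user story only, without looking at how search_rooms is implemented.
TEST_CASES = [
    ({"beds": 2, "max_price": 100}, [102]),
    ({"beds": 1, "max_price": 50}, []),
    ({"beds": 1, "max_price": 200}, [101, 102, 201]),
]


def run_functional_tests():
    """Compare actual results with expected results for every test case."""
    results = []
    for inputs, expected in TEST_CASES:
        actual = [r.number for r in search_rooms(ROOMS, **inputs)]
        results.append(actual == expected)
    return results


print(run_functional_tests())
```

A `False` entry in the returned list would mean the actual result differs from the expected one, which is what R would report as a failure.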

The second test type is Non-functional testing.

Non-functional testing of a system evaluates characteristics of systems and software such as usability, performance efficiency, or security. See the ISO/IEC 25010 standard for a classification of software product quality characteristics. Non-functional tests can, and often should, be performed at all test levels, and as early as possible. Black-box techniques may be used to derive test conditions and test cases, just as in functional testing. The thoroughness of non-functional testing can be measured through non-functional coverage, which is the extent to which some type of non-functional element has been exercised by tests, expressed as a percentage.

For example, using traceability between tests and supported devices for a mobile application, we can calculate the percentage of devices addressed by compatibility testing, potentially identifying coverage gaps.

Non-functional test design and execution may involve special skills or knowledge, such as knowledge of the inherent weaknesses of a design or technology.
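The calculation can be sketched in a few lines. The device names, test case IDs, and the `coverage_percent` helper below are all hypothetical and chosen only to illustrate the idea: collect the devices that at least one test traces to, divide by the number of supported devices, and list whatever is left over as a coverage gap.

```python
def coverage_percent(covered, required):
    """Percentage of required items that appear in the covered set."""
    required = set(required)
    if not required:
        raise ValueError("required must not be empty")
    return 100.0 * len(required & set(covered)) / len(required)


# Hypothetical list of devices the mobile application must support.
SUPPORTED_DEVICES = {"Phone A", "Phone B", "Phone C", "Tablet D"}

# Hypothetical traceability: test case ID -> devices it was executed on.
TRACEABILITY = {
    "TC-01": {"Phone A", "Phone B"},
    "TC-02": {"Phone C"},
    "TC-03": {"Phone A"},
}

tested_devices = set().union(*TRACEABILITY.values())
coverage = coverage_percent(tested_devices, SUPPORTED_DEVICES)
gaps = sorted(SUPPORTED_DEVICES - tested_devices)

print(f"Compatibility coverage: {coverage}%")  # 3 of 4 devices -> 75.0%
print(f"Devices without any test: {gaps}")     # ['Tablet D']
```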

Let’s return to our story.

After the functional tests, R needs to conduct non-functional tests of the application. He needs to test the application's performance (page loading time, response time, etc.), security (e.g., resistance to XSS attacks, SQL injection, and hijacking attacks), compatibility (whether the application works properly on different types of devices and browsers), usability (e.g., whether the interface is user-friendly), localization (whether the map works correctly, whether the entered data shows the correct places, etc.), reliability (whether the application's specified functions keep working properly despite failures), and so on.

This story shows that appropriate knowledge is really important for conducting non-functional testing.

The next test type is White-Box testing.

White-box testing derives tests based on the system’s internal structure or implementation. The internal structure may include code, architecture, workflows, and/or data flows within the system. The thoroughness of white-box testing can be measured through structural coverage, which is the extent to which some type of structural element has been exercised by tests, expressed as a percentage. At the component integration testing level, white-box testing may be based on the architecture of the system. White-box test design and execution may involve special skills or knowledge, such as how the code is built, how data is stored, and how to use coverage tools and correctly interpret their results.

Let’s consider an example.

This time, R needs to test the code of the application. The first thing R needs to do is understand the source code. He ought to read it carefully and look for potential defects. He should also look for security issues, to prevent attacks from hackers and from naive users who might inject malicious code into the application, knowingly or unknowingly. After that, R creates the test cases and executes them. Test cases for white-box testing are often written as additional code that tests the developer’s code. Finally, R compares the actual results with the expected results.

The example above shows how important good knowledge of the code is for conducting white-box testing.
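As a rough illustration of structural coverage, the sketch below instruments a small function by hand and records which branches the tests have exercised. The `nightly_rate` function, its pricing rules, and the branch names are hypothetical; in practice a coverage tool would collect this information instead of the manual `executed_branches` set used here.

```python
executed_branches = set()


def nightly_rate(base_price, nights, is_member):
    """Hypothetical pricing rule with two decisions (four branches)."""
    if nights >= 7:
        executed_branches.add("long_stay")
        rate = base_price * 90 // 100  # 10% discount for long stays
    else:
        executed_branches.add("short_stay")
        rate = base_price
    if is_member:
        executed_branches.add("member")
        rate -= 5  # flat member discount
    else:
        executed_branches.add("non_member")
    return rate


ALL_BRANCHES = {"long_stay", "short_stay", "member", "non_member"}


def branch_coverage():
    """Share of known branches exercised so far, as a percentage."""
    return 100.0 * len(executed_branches & ALL_BRANCHES) / len(ALL_BRANCHES)


# Test 1 is chosen by reading the code: it takes both "true" branches.
assert nightly_rate(100, 7, True) == 85
coverage_after_first_test = branch_coverage()   # 2 of 4 branches

# Test 2 is added specifically to reach the two remaining branches.
assert nightly_rate(100, 2, False) == 100
coverage_after_second_test = branch_coverage()  # 4 of 4 branches

print(coverage_after_first_test, coverage_after_second_test)
```

The second test exists only because the coverage figure showed that the `else` branches had not been executed, which is the kind of feedback structural coverage is meant to give.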

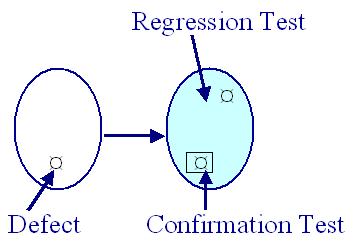

The last test type is change-related testing.

We should perform change-related testing when the team has fixed a defect or has added or changed functionality, to confirm that the change works properly and has not caused any unforeseen adverse consequences. There are two varieties of change-related testing. The first is confirmation testing.

We execute confirmation testing after a defect has been fixed. All test cases that failed due to the defect should be re-executed on the new software version. The purpose of a confirmation test is to confirm whether the original defect has been successfully fixed. The second variety is regression testing.

We execute regression testing when it is possible that a change made in one part of the code (whether a fix or another type of change) may accidentally affect the behavior of other parts of the code (whether within the same component, in other components of the same system, or even in other systems). Its purpose is to run tests that detect such unintended side effects.

Confirmation testing and regression testing can be performed at all test levels. Regression test suites are run many times and generally evolve slowly, so regression testing is a strong candidate for automation. Automation of these tests should start early in the project.
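The difference between the two varieties can be sketched as follows. The `total_price` function and the defect it once had (accepting a stay of zero nights) are invented for this illustration, as are the test values. The confirmation test re-runs the exact case that failed before the fix, while the regression suite re-runs previously passing cases to check that the fix did not break them.

```python
def total_price(price_per_night, nights):
    """Hypothetical fixed version: it now rejects stays shorter than one night."""
    if nights < 1:
        raise ValueError("nights must be at least 1")
    return price_per_night * nights


def confirmation_test():
    """Re-execute the test case that failed because of the original defect."""
    try:
        total_price(80, 0)
    except ValueError:
        return True   # defect fixed: invalid input is rejected
    return False      # defect still present


# Previously passing cases: (arguments, expected result).
REGRESSION_SUITE = [
    ((80, 1), 80),
    ((80, 3), 240),
    ((120, 2), 240),
]


def run_regression_suite():
    """Return the arguments of every case whose result changed unexpectedly."""
    return [
        args for args, expected in REGRESSION_SUITE
        if total_price(*args) != expected
    ]


print("Defect fixed:", confirmation_test())
print("Regressions found:", run_regression_suite())
```

Because the regression suite is plain data plus one loop, it can be re-run unchanged after every new build, which is why this kind of testing lends itself to automation.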

Let’s return to our story.

R has finished the functional, non-functional, and white-box testing. Almost everything was fine. R found a few bugs and reported them to the developers. The developers fixed the bugs and provided a fixed version for retesting. R retested the application and closed the defect reports. The next day, R received a new version of the application with a feature to test: an improved search for rooms using the map. R ran the regression tests and found an unintended side effect, which he reported to the developer. The developer fixed it, and R retested it and closed the ticket.

As we can see from the story, it’s important to execute change-related testing.

We’ve learned a lot about the test types.

We ought to look at maintenance testing as well.

Once deployed to production environments, software and systems need to be maintained. Software changes over time to fix defects discovered during use, to add new functionality, or to delete or alter functionality already delivered. Maintenance is also needed to preserve or improve non-functional quality characteristics of the component or system over its lifetime, especially performance efficiency, compatibility, reliability, security, and portability. When changes are made as part of maintenance, maintenance testing should be performed, both to evaluate whether the changes were made successfully and to check for possible side effects (e.g., regressions) in the parts of the system that remain unchanged. Maintenance can involve planned releases and unplanned releases (hotfixes).

A maintenance release may require maintenance testing at multiple test levels, using various test types, based on its scope. The scope of maintenance testing depends on:

- The degree of risk of the change (e.g., the degree to which the changed area of software communicates with other components or systems)

- The size of the existing system

- The size of the change

There are several reasons why software maintenance, and thus maintenance testing, takes place, both for planned and unplanned changes.

The system may need modification, such as planned enhancements (e.g., release-based), corrective and emergency changes, and changes to the operational environment (such as planned operating system or database upgrades). The system may also need to be migrated, for example from one platform to another, which can require operational tests of the new environment as well as of the changed software, or tests of data conversion when data from another application will be migrated into the system being maintained.

Retirement is another trigger. When an application reaches the end of its life and is retired, this can require testing of data migration or archiving if long data-retention periods are required. Testing of restore/retrieve procedures after archiving for long retention periods may also be needed.

We’ve covered maintenance testing, but that’s not all.

We also need to know that impact analysis is a really important process, especially in maintenance testing. Let’s talk about it.

Impact analysis evaluates the changes that were made for a maintenance release to identify the intended consequences as well as expected and possible side effects of a change, and to identify the areas in the system that will be affected by the change.

It can also help to identify the impact of a change on existing tests. We need to test the side effects and affected areas in the system for regressions, possibly after updating any existing tests affected by the change.

Impact analysis can also be done before a change is made, to help decide whether the change should be made at all, based on the potential consequences in other areas of the system.

The impact analysis process may be difficult if:

- Specifications (e.g., business requirements, user stories, architecture) are out of date or missing

- Test cases are not documented or are out of date

- Bi-directional traceability between tests and the test basis has not been maintained

- Tool support is weak or non-existent

- The people involved do not have domain and/or system knowledge

- Insufficient attention has been paid to the software’s maintainability during development

For instance, suppose the team needs to migrate the application to a new system because the old one has weak security. They need to archive the application database during the migration for security purposes. After that, they receive another application to modify. This time it is a planned modification with a release date. The developers code the solution, the testers test the application, and the team can then deploy the modified version to production.

We’ve covered a lot of information about test types and maintenance testing.

I hope you enjoyed reading this article.

The next post will arrive soon 🙂

Part of the content of this post is based on the ISTQB FL Syllabus (v. 2018).

Graphics with hyperlinks used in this post come from other sources; the full URLs are included in the hyperlinks.