Some time ago, I wrote a post about the Software Development Lifecycle (SDLC). It explained what the SDLC is and why it is important in software testing, and it presented and explained the SDLC models. In this post we will focus on the SDLC as well, but this time from a performance testing perspective.

What are the principal performance testing activities during the test process?

Performance testing is iterative in nature. Every test provides valuable insights into application and system performance. We use the information gathered from one test to correct or optimize application and system parameters. The next iteration then shows the results of those modifications, and so on until we reach the test objectives.

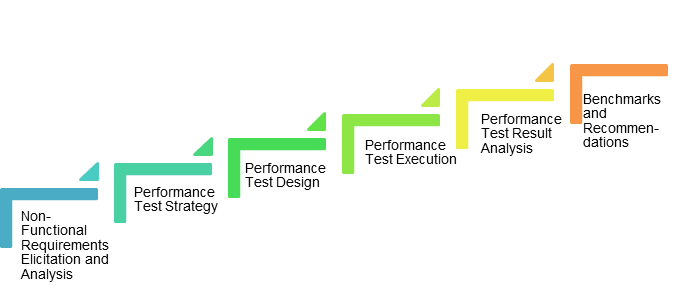
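The iterative cycle described above can be sketched roughly as follows. This is only an illustration: `run_test`, `fake_run`, the "pool size" parameter, and the doubling strategy are hypothetical stand-ins, not part of any real tool. In practice each iteration would be a full test run followed by analysis.

```python
from typing import Callable


def tune_until_objective(
    run_test: Callable[[int], float],
    objective_ms: float,
    pool_size: int = 4,
    max_iterations: int = 10,
) -> tuple[int, float, int]:
    """Repeat test -> analyze -> adjust until the objective is met.

    Returns (final pool size, last measured response time, iterations used).
    """
    measured = run_test(pool_size)
    iterations = 1
    while measured > objective_ms and iterations < max_iterations:
        pool_size *= 2                  # adjust one parameter based on the last result
        measured = run_test(pool_size)  # the next iteration shows the effect
        iterations += 1
    return pool_size, measured, iterations


# Hypothetical stand-in for a real test run:
# the response time shrinks as the pool grows.
def fake_run(pool_size: int) -> float:
    return 2000.0 / pool_size


result = tune_until_objective(fake_run, objective_ms=200.0)
print(result)  # (16, 125.0, 3)
```

The `max_iterations` limit is there because, in a real project, the objective may not be reachable by tuning a single parameter.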

We will present the principal performance testing activities below.

Test planning is particularly important for performance testing because we need to allocate resources such as test environments and test data. In addition, we establish the scope of performance testing. During test planning, we perform risk identification and risk analysis activities, and update the relevant information in any test planning documentation (e.g., test plan).

In Test Monitoring and Control, we define control measures that provide action plans should issues be encountered which might impact performance efficiency. Such measures include increasing the load generation capacity, changing, adding, or replacing hardware, and making changes to network components and the software implementation.

We evaluate the performance test objectives to check for exit criteria achievement.

In Test Analysis, effective performance tests are based on an analysis of performance requirements, test objectives, Service Level Agreements (SLA), IT architecture, process models, and other items that comprise the test basis. We may support this activity by modeling and analyzing system resource requirements and/or behavior using spreadsheets or capacity planning tools. We identify the specific test conditions such as load levels, timing conditions, and transactions to be tested. Then, we decide on the required type(s) of performance tests (e.g., load, stress, scalability).

During Test Design, we design performance test cases. We create them in modular form so that we can use them as the building blocks of larger, more complex performance tests.
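As a rough illustration of the "building blocks" idea, the sketch below composes small steps into a larger scenario. The step names (`login`, `search`, `add_to_cart`, `checkout`) and the `scenario` helper are made up for this example. Real steps would send requests to the system under test instead of appending to a log.

```python
from typing import Callable

# A step receives a shared session dict and performs one user action.
Step = Callable[[dict], None]


def login(session: dict) -> None:
    session["log"].append("login")


def search(session: dict) -> None:
    session["log"].append("search")


def add_to_cart(session: dict) -> None:
    session["log"].append("add_to_cart")


def checkout(session: dict) -> None:
    session["log"].append("checkout")


def scenario(*steps: Step) -> Step:
    """Combine small steps into one larger, reusable step."""
    def run(session: dict) -> None:
        for step in steps:
            step(session)
    return run


# Small blocks are reused to build bigger tests.
browse = scenario(login, search)
browse_and_buy = scenario(browse, add_to_cart, checkout)

session = {"log": []}
browse_and_buy(session)
print(session["log"])  # ['login', 'search', 'add_to_cart', 'checkout']
```

Because a scenario is itself a step, it can be nested inside other scenarios, which keeps larger tests short and readable.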

In the Test Implementation phase, we order performance test cases into performance test procedures. These procedures should reflect the steps that the user normally takes, as well as other functional activities that we cover during performance testing. During this phase, we establish and/or reset the test environment before each test execution. Since performance testing is typically data-driven, we need a process to establish test data.

Test execution occurs when we conduct the performance test, often by using performance test tools. We evaluate test results to determine if the system’s performance meets the requirements and other stated objectives. We report any defects.

In Test Completion, we provide the performance test results to the stakeholders (e.g., product owners) in a test summary report. We express the results through metrics, which are often aggregated to simplify the meaning of the test results and presented visually (e.g., on dashboards).
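To show what aggregating metrics can look like, here is a minimal sketch that reduces raw response times to a handful of summary values. The sample data and the choice of metrics (mean, median, 90th percentile, maximum) are assumptions for illustration. The percentile uses the simple nearest-rank method, and reporting tools may use a different one.

```python
import math
import statistics


def summarize(response_times_ms: list[float]) -> dict[str, float]:
    """Aggregate raw response times into a few summary metrics."""
    if not response_times_ms:
        raise ValueError("no samples to summarize")
    ordered = sorted(response_times_ms)

    def percentile(p: int) -> float:
        # Nearest-rank method: the smallest sample such that
        # at least p% of all samples are at or below it.
        rank = max(1, math.ceil(p * len(ordered) / 100))
        return ordered[rank - 1]

    return {
        "count": len(ordered),
        "mean": statistics.fmean(ordered),
        "median": statistics.median(ordered),
        "p90": percentile(90),
        "max": ordered[-1],
    }


# Made-up sample: ten response times in milliseconds.
samples = [120, 135, 150, 160, 180, 210, 240, 300, 450, 900]
summary = summarize(samples)
print(summary)
```

In this sample the mean (284.5 ms) is well above the median (195 ms) because of one slow request. That is one reason why reports usually show percentiles and not only averages.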

How do we categorize Performance risks for different architectures?

Application or system performance varies considerably based on the architecture, application, and host environment. While it is not possible to provide a complete list of performance risks for all systems, the list below includes some typical types of risks associated with particular architectures:

Single Computer Systems

These are systems or applications that run entirely on one non-virtualized computer. Performance can degrade due to excessive resource consumption, including memory leaks, background activities such as security software, etc. It can also degrade due to inefficient implementation of algorithms that do not make use of available resources (e.g., main memory) and, as a result, execute slower than required.

Multi-tier Systems

These are systems of systems that run on multiple servers, each of which performs a specific set of tasks, for example a database server, an application server, and a presentation server. Each server is, of course, a computer and subject to the risks given earlier. In addition, performance can degrade due to poor or non-scalable database design or insufficient capacity on any single server.

Distributed Systems

These are systems of systems, similar to a multi-tier architecture, but the various servers may change dynamically. An e-commerce system is one example. In addition to the risks associated with multi-tier architectures, this architecture can experience performance problems in critical workflows, etc.

Virtualized Systems

These are systems where the physical hardware hosts multiple virtual computers. These virtual machines may host single-computer systems and applications, as well as servers that are part of a multi-tier or distributed architecture. Performance risks that arise specifically from virtualization include excessive load on the hardware across all the virtual machines, and improper configuration of the host virtual machine resulting in inadequate resources.

Dynamic/Cloud-based Systems

These are systems that offer the ability to scale on-demand, increasing capacity as the level of load increases. These systems are typically distributed and virtualized multi-tier systems, albeit with self-scaling features designed specifically to mitigate some of the performance risks associated with those architectures. However, there are risks associated with failures to properly configure these features during initial setup or subsequent updates.

Client–Server Systems

These are systems running on a client that communicates via a user interface with a single server, multi-tier server, or distributed server. Since there is code running on the client, the single computer risks apply to that code, while the server-side issues mentioned above apply as well.

Mobile Applications

These are applications running on a smartphone, tablet, or another mobile device. Such applications are subject to the risks mentioned for client-server and browser-based (web) applications. In addition, performance issues can arise due to the limited and variable resources and connectivity available on the mobile device. Finally, mobile applications often have heavy interactions with other local mobile apps and remote web services, any of which can potentially become a performance efficiency bottleneck.

Embedded Real-time Systems

These are systems that work within or even control everyday things such as cars (e.g., intelligent braking systems), elevators, traffic signals, and more. These systems often have many of the risks of mobile devices, including (increasingly) connectivity-related issues since these devices are connected to the Internet. However, the diminished performance of a mobile video game is usually not a safety hazard for the user, while such slowdowns in a vehicle braking system could prove catastrophic.

Mainframe Applications

These are applications (in many cases decades-old applications) supporting often mission-critical business functions in a data center. They sometimes support batch processing. Most are quite predictable and fast when used as originally designed. However, many of these are now accessible via APIs, web services, or through their database, which can result in unexpected loads that affect the throughput of established applications.

Performance risks across the SDLC

For performance-related risks to the quality of the product, the process is:

- Identify risks to product quality, focusing on characteristics such as time behavior, resource utilization, and capacity.

- Assess the identified risks, ensuring that the relevant architecture categories are addressed. Evaluate the overall level of risk for each identified risk in terms of likelihood and impact using clearly defined criteria.

- Take appropriate risk mitigation actions for each risk item based on the nature of the risk item and the level of risk.

- Manage risks on an ongoing basis to ensure that the risks are adequately mitigated before release.
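The assessment step above can be illustrated with a simple scoring sketch. The 1 to 5 scales, the multiplication of likelihood by impact, and the example risks are assumptions made for this illustration. A real project would use its own clearly defined criteria.

```python
from dataclasses import dataclass


@dataclass
class PerformanceRisk:
    description: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int      # assumed scale: 1 (negligible) to 5 (critical)

    @property
    def level(self) -> int:
        # One common convention: risk level = likelihood x impact.
        return self.likelihood * self.impact


# Hypothetical risks for an online store.
risks = [
    PerformanceRisk("Slow checkout under peak load", likelihood=4, impact=5),
    PerformanceRisk("Memory leak in the search service", likelihood=2, impact=4),
    PerformanceRisk("Report generation exceeds the batch window", likelihood=3, impact=2),
]

# Highest risk first, so that mitigation effort goes where it matters most.
ranked = sorted(risks, key=lambda r: r.level, reverse=True)
for risk in ranked:
    print(f"{risk.level:>2}  {risk.description}")
```

Re-running the ranking whenever likelihood or impact estimates change is a lightweight way to manage the risks on an ongoing basis.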

As with quality risk analysis in general, the participants in this process should include both business and technical stakeholders. For performance-related risks, we must start the risk analysis process early and repeat it regularly. The tester should avoid relying entirely on performance testing conducted towards the end of the system test level and system integration test level. Good performance engineering can help project teams avoid the late discovery of critical performance defects during higher test levels, such as system integration testing or user acceptance testing. Performance defects found at a late stage in the project can be extremely costly and may even lead to the cancellation of entire projects. As with any type of quality risk, we can never avoid performance-related risks completely, i.e., some risk of performance-related production failure will always exist.

For example, in the case of an online store, simply saying that customers may still experience delays while using the website isn’t helpful. It doesn’t give an idea of the amount and severity of the risk.

Instead, providing clear insight into the percentage of customers likely to experience delays equal to or exceeding certain thresholds will help people understand the status.
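Reporting the share of customers who experience delays at or above certain thresholds can be sketched as follows. The page load times and the thresholds (2, 3, and 5 seconds) are invented for the example.

```python
def share_at_or_above(
    response_times_s: list[float], thresholds_s: list[float]
) -> dict[float, float]:
    """For each threshold, return the percentage of samples at or above it."""
    total = len(response_times_s)
    if total == 0:
        raise ValueError("no samples")
    return {
        threshold: 100.0 * sum(1 for rt in response_times_s if rt >= threshold) / total
        for threshold in thresholds_s
    }


# Made-up page load times in seconds, one per customer session.
page_loads_s = [0.8, 1.1, 1.4, 1.9, 2.0, 2.3, 3.1, 3.5, 4.2, 6.0]

shares = share_at_or_above(page_loads_s, [2.0, 3.0, 5.0])
for threshold, percent in shares.items():
    print(f"{percent:.0f}% of sessions waited {threshold} s or longer")
```

A statement such as "40% of sessions waited 3 seconds or longer" tells stakeholders far more than "some customers may experience delays".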

Performance Testing Activities depending on the SDLC type

We organize and perform performance testing activities differently, depending on the type of software development lifecycle in use. We will present them below.

Sequential Development Models

In sequential development models, the ideal practice of performance testing is to include performance criteria as part of the acceptance criteria, which we define at the outset of a project. We should perform performance testing activities throughout the SDLC. As the project progresses, each successive performance test activity should be based on items defined in the prior activities, as shown below.

Concept – Verify that system performance goals are defined as acceptance criteria for the project.

Requirements – Verify that performance requirements are defined and represent stakeholder needs correctly.

Analysis and Design – Verify that the system design reflects the performance requirements.

Coding/Implementation – Verify that the code is efficient and reflects the requirements and design in terms of performance.

Component Testing – Conduct component-level performance testing.

Component Integration Testing – Conduct performance testing at the component integration level.

System Testing – Perform performance testing at the system level, which includes hardware, software, procedures, and data that are representative of the production environment. We can simulate system interfaces provided that they give a true representation of performance.

System Integration Testing – Conduct performance testing with the entire system which is representative of the production environment.

Acceptance Testing – Validate that system performance meets the originally stated user needs and acceptance criteria.

Iterative and Incremental Development Models

In these development models, such as Agile, we can see performance testing as an iterative and incremental activity. Performance testing can occur as part of the first iteration, or as an iteration dedicated entirely to performance testing. However, with these lifecycle models, performance testing may be executed by a separate team tasked with performance testing.

Continuous Integration (CI) is commonly performed in iterative and incremental software development lifecycles, which facilitates a highly automated execution of tests. The most common objective of testing in CI is to perform regression testing and ensure that each build is stable. Performance testing can be part of the automated tests that we perform in CI if we design the tests so that they can be executed at the build level.

However, unlike functional automated tests, performance tests in CI raise additional concerns:

- The setup of the performance test environment.

- Determining which performance tests to automate in CI.

- Creating the performance tests for CI.

- Executing performance tests on portions of an application or system.
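With the concerns above in mind, a build-level performance check can be as small as timing one operation and comparing it with a stored baseline. The sketch below is only an assumption of how such a check might look: `measure_median_ms`, `within_budget`, the 10% tolerance, and the sample operation are all hypothetical, and timings on shared CI agents are noisy, so real thresholds need care.

```python
import statistics
import time
from typing import Callable


def measure_median_ms(operation: Callable[[], object], runs: int = 5) -> float:
    """Run the operation several times and return the median duration in ms."""
    durations = []
    for _ in range(runs):
        start = time.perf_counter()
        operation()
        durations.append((time.perf_counter() - start) * 1000)
    return statistics.median(durations)


def within_budget(measured_ms: float, baseline_ms: float, tolerance: float = 0.10) -> bool:
    """True if the measurement is no more than `tolerance` above the baseline."""
    return measured_ms <= baseline_ms * (1 + tolerance)


# Hypothetical operation standing in for a component under test.
def build_report() -> int:
    return sum(i * i for i in range(10_000))


measured = measure_median_ms(build_report)
baseline_ms = 50.0  # assumed value taken from an earlier, accepted build

if within_budget(measured, baseline_ms):
    print(f"OK: {measured:.2f} ms is within the budget")
else:
    print(f"FAIL: {measured:.2f} ms exceeds the baseline of {baseline_ms} ms")
```

Using the median of several runs instead of a single measurement reduces the effect of one-off spikes. In a CI job, the failing branch would typically end the build with a non-zero exit code.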

Performance testing in the iterative and incremental software development lifecycles can also have its own lifecycle activities:

Release Planning – We consider performance testing from the perspective of all iterations in a release. We may conduct it from the first iteration to the final iteration. We identify and assess performance risks, and we plan mitigation measures.

Iteration Planning – In the context of each iteration, we perform performance testing within the iteration and as each iteration is completed. We assess performance risks in more detail for each user story.

User Story Creation – User stories often form the basis of performance requirements in Agile methodologies. They can include the specific performance criteria described in the associated acceptance criteria. We call these “non-functional” user stories.

Design of Performance Tests – We use the performance requirements and criteria described in particular user stories to design the tests.

Coding/Implementation – During coding, we may perform performance testing at a component level. An example of this would be the tuning of algorithms for optimum performance efficiency.

Testing/Evaluation – While we typically perform testing in close proximity to development activities, we can perform performance testing as a separate activity, depending on the scope and objectives of performance testing during the iteration.

Delivery – Since delivery will introduce the application to the production environment, performance will need to be monitored to determine if the application achieves the desired levels of performance in actual usage.

Commercial Off-the-Shelf (COTS) and other Supplier/Acquirer Models

Many organizations do not develop applications and systems themselves, but instead are in the position of acquiring software from vendor sources or from open-source projects. In such supplier/acquirer models, performance is an important consideration that requires testing from both the supplier (developer) and acquirer (customer) perspectives. Regardless of the source of the application, it is often the responsibility of the customer to validate that the performance meets their requirements. In the case of COTS applications, the customer has sole responsibility to test the performance of the product in a realistic test environment prior to deployment.

Summary

In this post, we covered the principal performance testing activities during the test process. We also looked at how performance risks are categorized for different architectures and how they are handled across the SDLC. Finally, we described how performance testing activities differ depending on the SDLC type.

I hope you’ve enjoyed the article and found some interesting information.

The next post will arrive soon 🙂

The information in this post has been based on the ISTQB Foundation Level Performance Testing Syllabus.

Graphics with hyperlinks used in this post come from other sources; the full URL is included in each hyperlink.